Last Updated on 07/13/2018 by Mark Beckenbach

It’s pretty cool when AI can automatically “fix” the grain in your photos and bring back more detail.

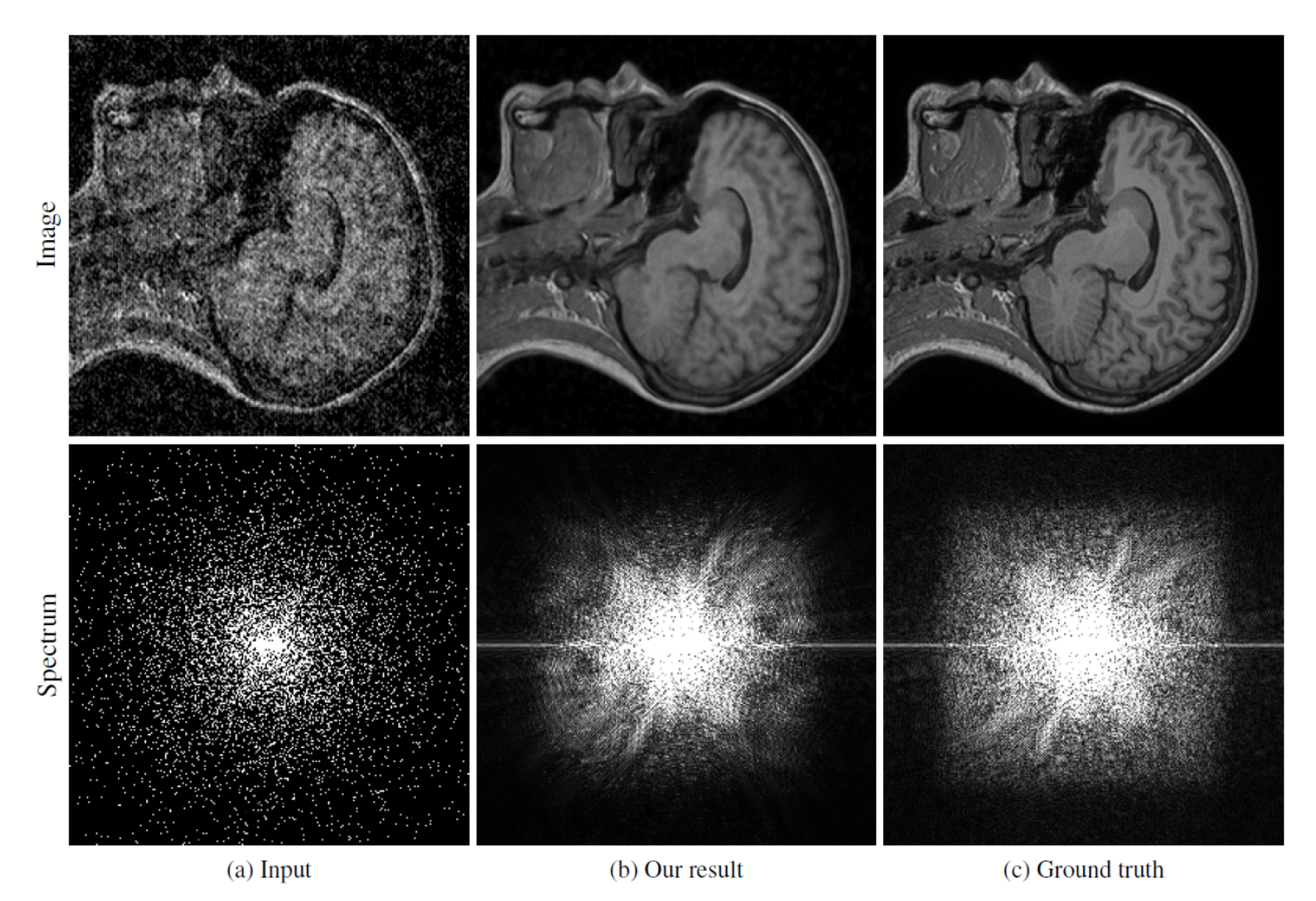

I think many photographers wouldn’t mind software that could automatically fix the noise in their photos and bring back more detail. Then again, many would probably rather do it themselves and fine-tune the result to their exact needs. After all, a little bit of grain can add character to a scene. In a recent announcement, NVIDIA said it had teamed up with Aalto University and MIT to create algorithms designed to remove the grain from your images, and they’re doing a great job!

To do this, they trained a neural network on images with grain at varying degrees. According to the report, each training example needed only two grainy copies of the same scene, and the network eventually learned to create a completely noise-free image without ever seeing one. The results are some crazy noise-nerfing algorithms. In my experience, Skylum has had the best noise-nerfing technology while preserving detail, though Adobe and Capture One are no slouches either.
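Why can a network learn to remove grain when it only ever sees grainy targets? The toy Python sketch below illustrates the general principle, under loudly stated assumptions: it is not NVIDIA’s network or code. It replaces the deep network with a simple linear denoiser (`y ≈ a*x + b`) and uses synthetic pixel values in [0, 1) with Gaussian noise (sigma = 0.2) standing in for grain. All function names are hypothetical. The denoiser is fitted twice, once against clean targets and once against a second, independently grainy copy of each pixel. Under a squared-error loss the independent noise in the targets averages out, so both fits land in nearly the same place.

```python
import random
from statistics import fmean


def fit_linear(xs, ys):
    """Ordinary least-squares fit of y ≈ a*x + b (a stand-in for a neural network)."""
    mx, my = fmean(xs), fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var = sum((x - mx) ** 2 for x in xs)
    a = cov / var
    return a, my - a * mx


def make_data(n=100_000, sigma=0.2, seed=0):
    """Hypothetical synthetic data: clean pixels plus two independent grainy copies."""
    rng = random.Random(seed)
    clean = [rng.random() for _ in range(n)]               # "true" pixel intensities
    noisy_in = [s + rng.gauss(0, sigma) for s in clean]    # first grainy copy (network input)
    noisy_tgt = [s + rng.gauss(0, sigma) for s in clean]   # second grainy copy (training target)
    return clean, noisy_in, noisy_tgt


def mse(pred, ref):
    """Mean squared error between two equal-length sequences."""
    return fmean((p - r) ** 2 for p, r in zip(pred, ref))


clean, noisy_in, noisy_tgt = make_data()

# Conventional training: grainy input, clean target.
a_clean, b_clean = fit_linear(noisy_in, clean)
# Noisy-target training: grainy input, grainy target (no clean data used).
a_noisy, b_noisy = fit_linear(noisy_in, noisy_tgt)

denoised = [a_noisy * x + b_noisy for x in noisy_in]
print(f"clean-target fit: a={a_clean:.3f}, b={b_clean:.3f}")
print(f"noisy-target fit: a={a_noisy:.3f}, b={b_noisy:.3f}")
print(f"error vs. clean, raw input: {mse(noisy_in, clean):.4f}")
print(f"error vs. clean, denoised:  {mse(denoised, clean):.4f}")
```

The coefficients from the two fits should agree closely, and the denoiser trained only on grainy targets should still move the output closer to the clean pixels than the raw input. A real denoiser is far more capable than a straight line, but the reason noisy targets work is the same averaging effect.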

NVIDIA, Aalto, and MIT are presenting the research this week. It’s not perfect yet, though. According to the blog post:

“There are several real-world situations where obtaining clean training data is difficult: low-light photography (e.g., astronomical imaging), physically-based rendering, and magnetic resonance imaging,” the team said. “Our proof-of-concept demonstrations point the way to significant potential benefits in these applications by removing the need for potentially strenuous collection of clean data. Of course, there is no free lunch – we cannot learn to pick up features that are not there in the input data – but this applies equally to training with clean targets.”

This new AI tech makes us wonder whether it will be licensed to other companies or whether NVIDIA is genuinely interested in getting into the world of photography. If NVIDIA can go further and apply the algorithm to an image as it is shot and sent through the processor, this could be even bigger and potentially game-changing. For that to happen, you’d theoretically need a connected camera of some sort that would take the image, beam it to NVIDIA’s servers, and then send the result back to your device. I highly doubt this could be done efficiently with a more traditional camera. However, it is a call to camera manufacturers to start building more connected cameras.

Of course, camera technology would also need to adapt. For example, this could be a major drain on battery life. Manufacturers would also need to innovate more with their apps, and perhaps that could be the solution. Offering NVIDIA’s de-noising through an app could give it a whole lot more potential with photographers who are ditching the desktop. Beaming the image to your phone, applying the AI, and then sending the image straight to a client or social media could save a photographer lots of time.

Nothing has been said about the file sizes or resolutions of the images used. I’d be interested to see how it handles modern medium format digital files, or even those from the Sony a7R III and the Nikon D850.

Similar things have been done before. For example, Google created an AI that can automatically colorize images, and Canon holds a patent for technology that aids with composition.