Last Updated on 03/26/2019 by Mark Beckenbach

Quantum Dots could majorly change your camera sensor in the future, and could make smartphones that much better.

Quantum Dots are a technology that could soon be built into camera sensors and significantly improve them. For photographers, that means higher end cameras with interchangeable lenses could take a quantum leap (pun intended) ahead of the smartphone world. Of course, if and when the technology comes to smartphones, it could also be quite disruptive. Internet services would need massive compression to make the images displayed on their platforms easy to load. But more than anything, it’s probably going to start the pixel peeping wars all over again.

No, this is not a reference to the Marvel Cinematic Universe and the upcoming Avengers: Endgame movie. To understand why this is important, what follows is first an incredibly simplistic description of how digital imaging sensors work, and then a look at a technology that is about to change the foundations of digital image making.

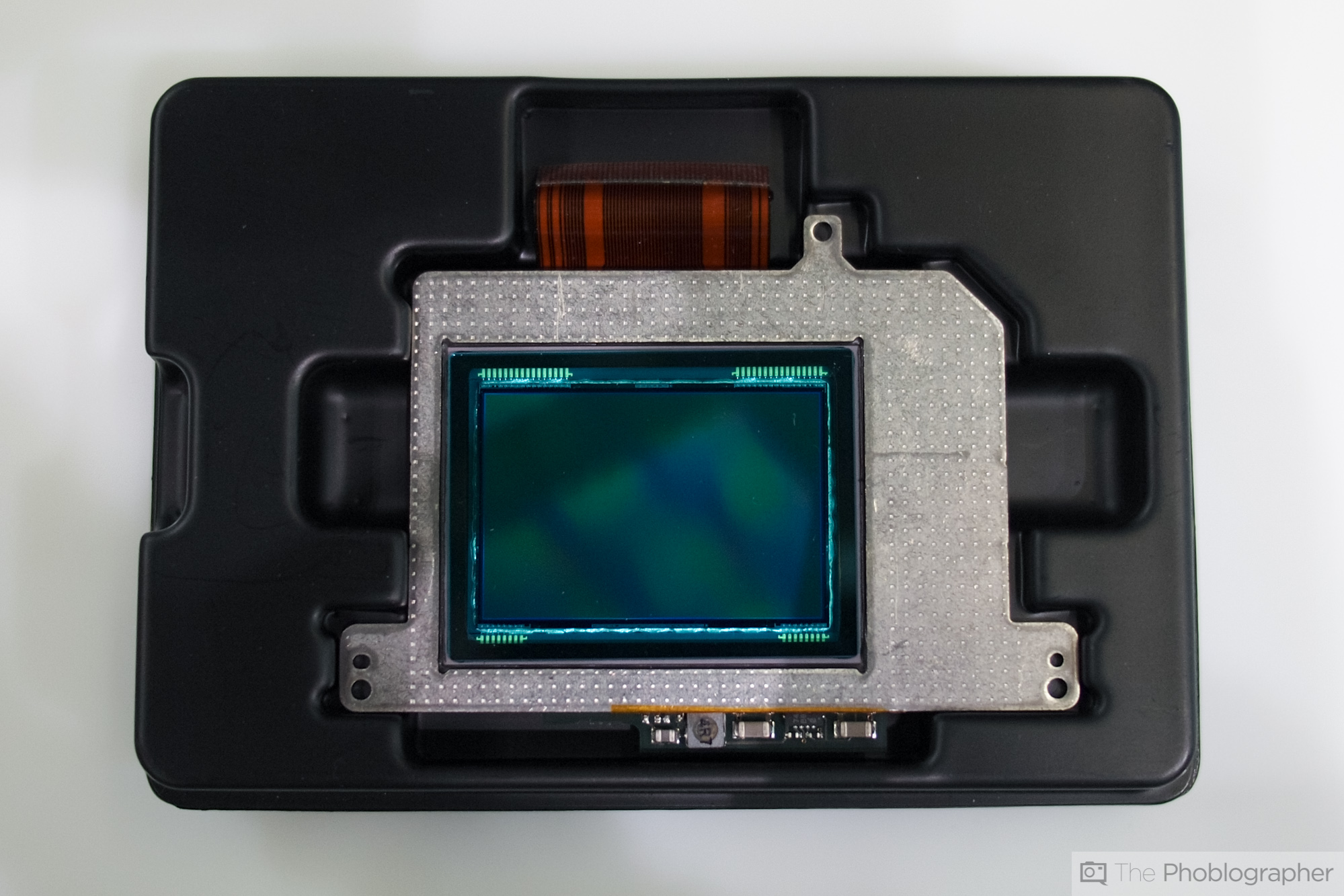

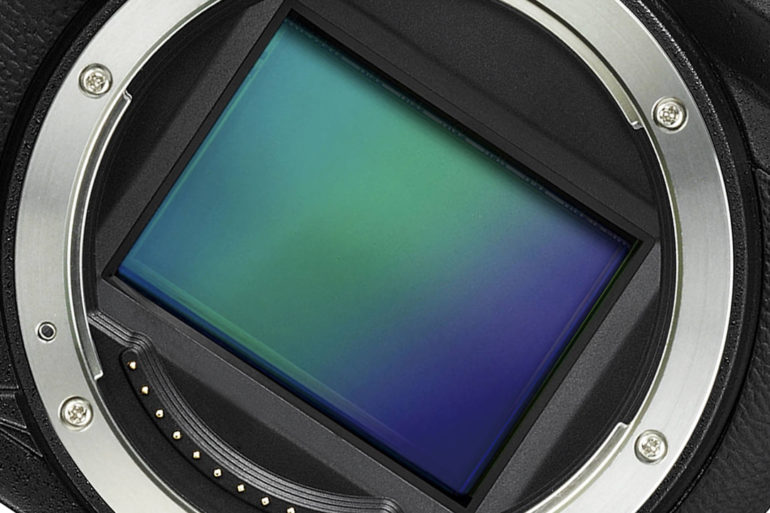

The Science Behind How the Sensor in Your Camera Works

The sensor in your camera is an array of millions of very small CMOS (Complementary Metal Oxide Semiconductor) or CCD (Charge Coupled Device) integrated circuits etched on a silicon wafer. One way to think of each of these circuits is as a kind of gate for the electrical current running through it. When an atom on the surface of the pixel absorbs a high enough level of electromagnetic energy in the form of light (photons), that atom ejects an electron (technically called a photoelectron because it was created by light), temporarily changing the electrical charge of the atom, which in turn changes the amount of electrical charge running through the pixel. The more light impinging on the CMOS, the greater the change in the electrical charge produced. Although CMOS sensors are very good at what they do, only 1 in 4 photons striking a CMOS actually causes this to happen (25% efficiency). Additionally, because each CMOS or CCD element, more colloquially a pixel, is capped by a Red, Green, or Blue filter in either a Bayer or Foveon filtration scheme, it cannot make use of all the light that could potentially strike it.
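To put the 25% figure in concrete terms, here is a minimal sketch of the arithmetic. The function name `photoelectrons` and the photon count of 10,000 are illustrative assumptions, not values from any sensor datasheet; only the 25% efficiency comes from the description above.

```python
def photoelectrons(photons: float, quantum_efficiency: float = 0.25) -> float:
    """Expected number of photoelectrons for a given number of incoming photons.

    quantum_efficiency is the fraction of photons that free an electron.
    The default of 0.25 is the "1 in 4" figure quoted in the article.
    """
    if not 0.0 <= quantum_efficiency <= 1.0:
        raise ValueError("quantum_efficiency must be between 0 and 1")
    return photons * quantum_efficiency


# Hypothetical exposure: 10,000 photons land on a single pixel.
incoming = 10_000
silicon = photoelectrons(incoming)             # article's 25% efficiency
ideal = photoelectrons(incoming, 1.0)          # hypothetical near-100% sensor

print(f"25% efficient pixel:  {silicon:.0f} electrons")
print(f"100% efficient pixel: {ideal:.0f} electrons")
```

The gap between the two numbers is signal that a more efficient light absorber could, in principle, recover from the same exposure.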

The Answer Is in Quantum Dots

Image from Wikimedia Commons.

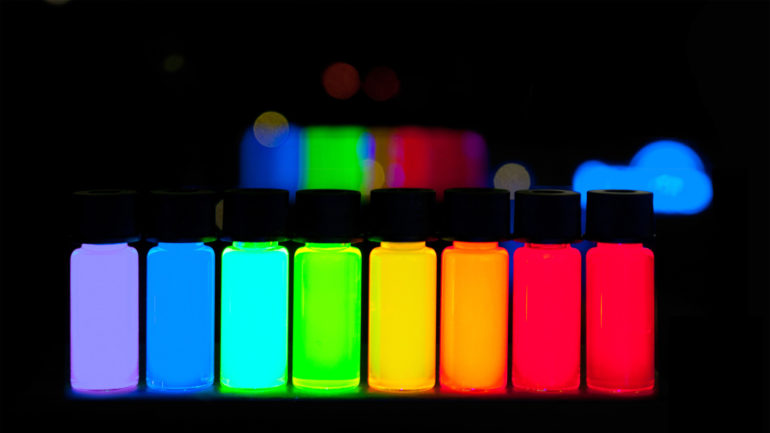

Quantum Dots work the same way, producing a charge when light strikes, but on a much smaller scale, and they are far more efficient at turning light into an electrical charge. A single CMOS pixel in a Nikon D850 is 4,350 nanometers (nm) across, while a Quantum Dot can be anywhere from 2 to 6nm in diameter. (The size of a Quantum Dot matters, as I’ll explain in a minute.) Just how small is a nanometer? The diameter of an average human hair is around 90,000nm. So instead of millions of CMOS on an imaging wafer there will be billions.
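The scale difference is easier to grasp with a little arithmetic. This is a rough sketch that uses only the sizes quoted above and assumes, purely for illustration, that dots sit edge to edge in a square grid. A real Quantum Dot film would not be packed this neatly.

```python
PIXEL_NM = 4_350      # one D850 pixel, as quoted in the article
DOT_MIN_NM = 2        # smallest Quantum Dot diameter quoted
DOT_MAX_NM = 6        # largest Quantum Dot diameter quoted
HAIR_NM = 90_000      # average human hair diameter quoted


def dots_across(span_nm: int, dot_nm: int) -> int:
    """How many dots of a given diameter fit side by side across a span."""
    return span_nm // dot_nm


def dots_in_square(span_nm: int, dot_nm: int) -> int:
    """Dots in a square of the given side length (idealized square grid)."""
    return dots_across(span_nm, dot_nm) ** 2


print("Pixels across one hair:", round(HAIR_NM / PIXEL_NM, 1))
print("6nm dots across one pixel:", dots_across(PIXEL_NM, DOT_MAX_NM))
print("2nm dots across one pixel:", dots_across(PIXEL_NM, DOT_MIN_NM))
print("6nm dots in one pixel's area:", dots_in_square(PIXEL_NM, DOT_MAX_NM))
print("2nm dots in one pixel's area:", dots_in_square(PIXEL_NM, DOT_MIN_NM))
```

Under these assumptions, the footprint of a single current pixel could hold somewhere between roughly half a million and several million dots.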

Standard CMOS designs are inefficient for imaging for two reasons:

- Silicon is a weak absorber of light(1).

- About half the surface area of a CMOS is covered by the necessary wiring.

Backside Illuminated (BSI) CMOS designs (like that in a D850) overcome the second problem by putting the wiring behind the surface, but the fundamental problem of Silicon’s inefficiency at absorbing light remains.

Quantum Dots, on the other hand, are extremely efficient at converting light into an electrical charge and vice versa, which makes the technology a promising replacement for CMOS arrays in cameras and LEDs in monitors. The size of a quantum dot determines which wavelengths of light it can absorb. Longer wavelengths of light (reds and oranges) need the larger quantum dots, while smaller quantum dots take in the shorter (blue) wavelengths.
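One common textbook approximation for this size-to-color relationship is the Brus equation, which estimates the lowest photon energy a dot can absorb from its radius. The sketch below is only an illustration: the article does not name a material, so the constants are approximate, commonly cited values for cadmium selenide (an assumption), and the function name `brus_wavelength_nm` is made up for this example. The model is also known to get rough at the smallest sizes.

```python
import math

# Physical constants (SI units)
H = 6.62607015e-34         # Planck constant, J*s
C = 2.99792458e8           # speed of light, m/s
E_CHARGE = 1.602176634e-19 # elementary charge, C
M_E = 9.1093837015e-31     # electron rest mass, kg
EPS_0 = 8.8541878128e-12   # vacuum permittivity, F/m

# ASSUMED material: cadmium selenide, approximate illustrative values.
BAND_GAP_EV = 1.74         # bulk band gap, eV
M_ELECTRON_EFF = 0.13      # effective electron mass, in units of M_E
M_HOLE_EFF = 0.45          # effective hole mass, in units of M_E
REL_PERMITTIVITY = 10.6    # relative dielectric constant


def brus_wavelength_nm(diameter_nm: float) -> float:
    """Estimated absorption-edge wavelength (nm) for a dot of this diameter."""
    r = diameter_nm * 1e-9 / 2  # radius in meters
    # Quantum confinement raises the energy as the dot shrinks (1/r^2 term).
    confinement = (H ** 2 / (8 * r ** 2)) * (
        1 / (M_ELECTRON_EFF * M_E) + 1 / (M_HOLE_EFF * M_E)
    )
    # Electron-hole attraction lowers it slightly (1/r term).
    coulomb = 1.8 * E_CHARGE ** 2 / (4 * math.pi * REL_PERMITTIVITY * EPS_0 * r)
    energy_j = BAND_GAP_EV * E_CHARGE + confinement - coulomb
    return H * C / energy_j * 1e9


for d in (2, 4, 6):
    print(f"{d}nm dot -> absorption edge near {brus_wavelength_nm(d):.0f}nm")
```

The trend is the point here, not the exact numbers: in this model a larger dot has its absorption edge at a longer wavelength, which matches the red-versus-blue behavior described above.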

The science behind Quantum Dots is not new, but what enables the commercialization and commodification of the technology is research by a team of professors and graduate students at Stanford University and the University of California, Berkeley, led by Professors Alberto Salleo at Stanford and Paul Alivisatos at UC Berkeley. These scientists came up with a new method for checking the quality of vast quantities of Quantum Dots. The more of anything there is, the more chances there are for flaws, but what their research discovered is that a Quantum Dot array is as fault tolerant as the most perfect semiconductor array made on a single silicon crystal. Quantum Dots are also far cheaper and faster to manufacture, and the manufacturing process can potentially be free of the toxic heavy metals used in CMOS and other IC manufacturing. The goal for the next round of research is to see if Quantum Dots can reach near 100% efficiency. If that is the case, the implications not only for photography but for computer design are huge.

Your Lenses May Start to Suck

Well, for starters, in the future we might be talking about a camera’s resolving power in terms of terapixels, not megapixels. It also potentially means that cameras will use battery power far more efficiently. Lens acuity could become the weak link, because the difference is in the details and these sensors will be able to resolve differences to a much finer degree than is currently possible. The sharpness of a lens is judged by how well it can make a distinction between two parallel lines. The point at which the two lines blur into one is the limit of a lens’s resolution.

According to our interpretation of an article on Photonics, the size of a pixel on an imaging chip can be significantly reduced without a loss in light sensitivity. If so, there will naturally be pressure on lens manufacturers to increase the ability of a lens to resolve greater detail. But what are the hard limits on resolution imposed by the physics of refracting light through the multiple elements and groups in a complex optical system like a lens?
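One well known hard limit is diffraction. As a rough sketch, the Rayleigh criterion says that an ideal, aberration-free lens can just separate two points on the sensor when they are about 1.22 × wavelength × f-number apart. The choice of 550nm green light and the f-numbers below are assumptions made for illustration. Real lenses, with their aberrations, generally do worse than this ideal.

```python
PIXEL_NM = 4_350   # D850 pixel size quoted earlier in the article
DOT_MAX_NM = 6     # largest Quantum Dot diameter quoted earlier


def rayleigh_spacing_nm(wavelength_nm: float, f_number: float) -> float:
    """Smallest resolvable spacing on the sensor for an ideal lens (Rayleigh criterion)."""
    return 1.22 * wavelength_nm * f_number


GREEN_NM = 550  # assumed mid-spectrum wavelength

for f_number in (1.4, 2.8, 5.6, 8):
    spacing = rayleigh_spacing_nm(GREEN_NM, f_number)
    print(
        f"f/{f_number}: {spacing:.0f}nm "
        f"({spacing / PIXEL_NM:.2f} D850 pixels, "
        f"{spacing / DOT_MAX_NM:.0f} six-nanometer dots)"
    )
```

Even wide open, the smallest detail an ideal lens can deliver spans hundreds of nanometers, far larger than a single Quantum Dot. That suggests the extra sensor density would be more useful for gathering light and color than for raw resolving power.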

The Texture of Sensors

Quantum Dot technology may allow for the light receptive surface of a sensor to have more of a three dimensional shape and do away with micro-lenses. In this way, sensors could start behaving more like the light sensitive crystals in film. It could also mean doing away with the pixel-sized red, green, or blue filter that caps every pixel in current sensors. Today, the input of four adjacent (or, with Foveon sensors, stacked) pixels (1 capped with a red filter, 2 with green filters, and 1 with a blue filter) is needed to make a full set of color luminance values for each pixel. A future sensor made up of Quantum Dots would instead combine small diameter QDs with large ones, making far more efficient use of the light, with a higher signal to noise ratio at all “ISO” settings and far more precise color interpolation.
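For readers who have not seen the four-pixel grouping laid out, here is a small sketch of a Bayer mosaic. The RGGB ordering used below is one common layout and is an assumption here, and the helper name `bayer_mosaic` is invented for the example.

```python
from collections import Counter

# One repeating 2x2 Bayer tile: 1 red, 2 green, 1 blue (RGGB order assumed).
BAYER_TILE = (("R", "G"),
              ("G", "B"))


def bayer_mosaic(rows: int, cols: int) -> list[list[str]]:
    """Return the filter color that sits over each pixel of a rows x cols sensor."""
    return [[BAYER_TILE[r % 2][c % 2] for c in range(cols)] for r in range(rows)]


mosaic = bayer_mosaic(4, 4)
for row in mosaic:
    print(" ".join(row))

# Tally how many pixels sit under each filter color.
counts = Counter(color for row in mosaic for color in row)
print(dict(counts))
```

Each pixel records only one color, so the other two values at that position have to be interpolated from its neighbors. That interpolation step is what a filterless Quantum Dot sensor could, in theory, make more precise.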

For monitors, Quantum Dots are already being used in some televisions, but where I really see potential is in improved EVFs and virtual reality environments.

Intro written by Chris Gampat.