Last Updated on 07/31/2013 by Felix Esser

When the first digital cameras that were actually interesting to consumers appeared in the nineties, most of them used CCD imaging sensors. To record color information, digital imaging sensors were (and still are) typically equipped with a so-called Bayer pattern color filter. As technology advanced, another type of sensor emerged: the CMOS sensor. Today, CMOS sensors have replaced CCD sensors in most types of digital cameras. But besides these two, there are other types of sensors as well, some of which existed only for a short time, or only as patents. In this article, we want to take a look at the different types of digital imaging sensors and explain their technological peculiarities.

The CCD Sensor

Until a couple of years ago, when CMOS technology became easier to produce, and CMOS sensors started to improve in terms of the image quality they delivered, the CCD sensor was the gold standard for digital imaging sensors. In fact, many (myself included) still consider the output of a CCD sensor at its base sensitivity superior to that of a CMOS sensor in terms of color reproduction and detail–but that may be a matter of personal preference.

The full name of this sensor technology is ‘charge-coupled device’. As in any sensor, the light projected onto a CCD creates electric charges at each individual photosite. In a CCD, these charges are then passed along from pixel to pixel down each column of the sensor (through a ‘pipeline’, figuratively speaking) until they reach a ‘bucket’ at the edge of the sensor, where they are collected and converted into a signal that the camera’s image processor can use to create a picture.
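To make the ‘pipeline’ idea more concrete, here is a toy model of CCD-style readout. It is a sketch under simplifying assumptions, not how any particular camera works: charge transfer is assumed to be perfect, and the single output node (the ‘bucket’) is modeled as a plain multiplication by a hypothetical `gain`. The function name `ccd_readout` is made up for illustration.

```python
from typing import List


def ccd_readout(charges: List[List[float]], gain: float = 1.0) -> List[float]:
    """Toy CCD readout: shift charge packets down the columns into a
    serial register, then shift that register through one output node.

    Assumes perfect charge-transfer efficiency; `gain` stands in for the
    single output amplifier (hypothetical value)."""
    rows = [row[:] for row in charges]  # copy so the input is not modified
    signal = []
    while rows:
        # Parallel shift: every column moves down one step, and the bottom
        # row of charge packets lands in the serial (horizontal) register.
        serial_register = rows.pop()
        # Serial shift: packets leave one at a time through the same
        # output node, where the charge is converted into a signal.
        while serial_register:
            signal.append(serial_register.pop() * gain)
    return signal
```

Calling `ccd_readout([[1, 2], [3, 4]])` returns `[4.0, 3.0, 2.0, 1.0]`: the bottom row comes out first, and every packet leaves through the same output node, one after another.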

In the early days of digital photography, CCDs had the advantage that they were relatively easy to produce and provided good image quality. However, since they use quite a lot of energy, and heat up pretty quickly, only very small sensors could actually be used to deliver a constant ‘live view’ image to the camera’s rear screen. That’s the reason why this feature came to DSLRs (which typically use larger sensors) only in recent years, with the advance of CMOS sensors.

Notable cameras that use CCD sensors are the Leica M8 and M9, the Pentax K10D, and the early Nikon DSLRs such as the D70.

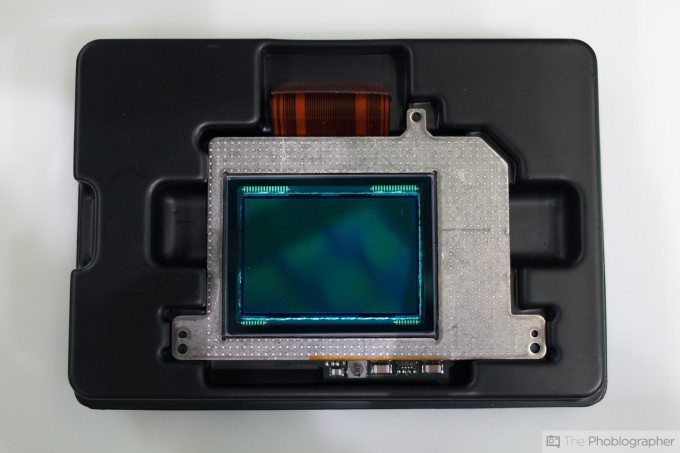

The CMOS Sensor

The CMOS sensor technology–CMOS being short for ‘complementary metal–oxide–semiconductor’–has been around as long as the CCD sensor technology. However, in the early days of digital photography, CMOS sensors were more complicated to produce, and didn’t provide the same image quality as CCDs. Today, the difference between CMOS and CCD is negligible for most intents and purposes.

The main difference between CMOS and CCD sensors is that in a CMOS sensor, the charges are not passed along a column of pixels; instead, each pixel has its own readout circuitry that converts its charge into a voltage on the spot. On top of this, a CCD outputs an analog signal that has to be converted to digital before the camera’s image processor can interpret it, whereas a CMOS sensor can perform this conversion on the chip itself and output a digital signal directly.
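For contrast with the CCD model, here is an equally simplified sketch of CMOS-style readout. It assumes that each pixel already holds a voltage from its own in-pixel amplifier, that rows are selected one at a time, and that every column has its own ideal on-chip analog-to-digital converter. Column-parallel converters are a common design choice, but treat that detail, and the names `adc` and `cmos_readout`, as illustrative assumptions.

```python
from typing import List


def adc(voltage: float, full_scale: float = 1.0, bits: int = 12) -> int:
    """Ideal analog-to-digital converter: clip the voltage to the valid
    range and quantize it into an integer code."""
    levels = 2 ** bits - 1
    clipped = min(max(voltage, 0.0), full_scale)
    return round(clipped / full_scale * levels)


def cmos_readout(voltages: List[List[float]], bits: int = 12) -> List[List[int]]:
    """Toy CMOS readout: no charge is shifted between pixels. Each row is
    selected in turn, and every column digitizes its own pixel, so the
    sensor hands out digital values directly."""
    frame = []
    for row in voltages:  # row select: address one row at a time
        frame.append([adc(v, bits=bits) for v in row])  # one ADC per column
    return frame
```

With 8-bit converters, `cmos_readout([[0.0, 1.0], [2.0, -1.0]], bits=8)` yields `[[0, 255], [255, 0]]`, with out-of-range voltages clipped to the converter’s limits.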

CMOS sensors also consume less power than CCDs and don’t overheat as easily, which makes them especially well suited for video recording and for live view (both of which are pretty much standard today). This is one reason why early DSLRs, many of which used CCD sensors, offered neither.

Another advantage that CMOS sensors have over CCDs is that they are less prone to image noise, especially at higher ISO speeds (i.e., when the sensor’s output signal is amplified). In terms of overall image quality, today’s CMOS sensors are on par with CCDs, which is why they are used in most current camera models, including professional ones.
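Why does sensor noise matter most at high ISO? A common simplified noise model combines photon shot noise (the square root of the signal) with the sensor’s read noise, added in quadrature. At high ISO the camera is usually compensating for little captured light, so the signal is small and read noise weighs more heavily. The sketch below uses that textbook model; the read-noise figures are hypothetical and do not describe any real sensor.

```python
import math


def snr(signal_electrons: float, read_noise_electrons: float) -> float:
    """Signal-to-noise ratio under a simple model: photon shot noise
    (sqrt of the signal) plus read noise, added in quadrature."""
    total_noise = math.sqrt(signal_electrons + read_noise_electrons ** 2)
    return signal_electrons / total_noise


# Hypothetical read-noise figures, chosen only for illustration.
for label, read_noise in [("lower read noise", 3.0), ("higher read noise", 12.0)]:
    bright = snr(10_000, read_noise)  # plenty of light (base ISO)
    dim = snr(100, read_noise)        # little light (high ISO)
    print(f"{label}: bright SNR {bright:.1f}, dim SNR {dim:.1f}")
```

With plenty of light, both hypothetical sensors come out at roughly the same SNR (100.0 vs. 99.3), but with little light the gap widens considerably (9.6 vs. 6.4), which is where a less noisy sensor pays off.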

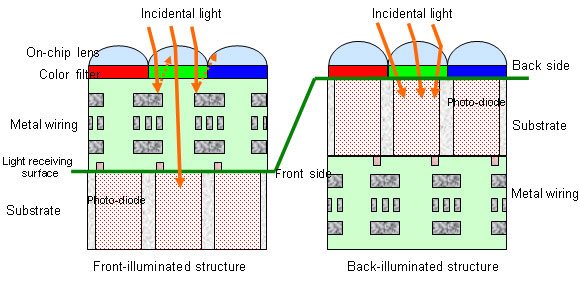

The BSI-CMOS Sensor

A variant of the CMOS sensor is the BSI-CMOS, the ‘back-side illuminated’ CMOS sensor. The main difference between the two is that a regular CMOS sensor has its circuitry on top of the photosensitive layer, so part of the incoming light is blocked before it reaches the photosites. BSI-CMOS sensors, which are used in many smartphones and compact cameras today, have the circuitry behind the photosensitive layer instead. Since this layout is essentially inverted, it is as if a regular CMOS sensor were illuminated from behind, hence the designation ‘back-side illuminated’.

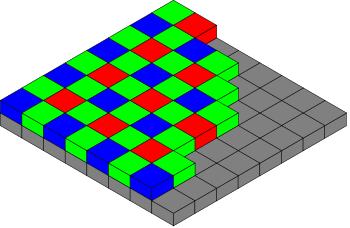

The Bayer Pattern

What both CCD and CMOS sensors have in common is the typical ‘Bayer’ pattern color filter array that they use to record color information. Without it, a sensor would receive only brightness information, since the photosites themselves cannot distinguish between different wavelengths of visible light. (In fact, this is exactly what monochrome sensors like the one in the Leica M Monochrom do: they register only the intensity of the incoming light, not its color.)

The Bayer pattern, which is named after its inventor, puts two green pixels, one red pixel, and one blue pixel in each square of four pixels, so that each pixel registers one of the three primary colors. During processing, the missing color values for each pixel are interpolated from its neighbors. This technique proved to work so well that it is the main type of color sensor technology used to this day.
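Here is a small sketch of the idea, assuming the common ‘RGGB’ arrangement (red and green on even rows, green and blue on odd rows). It fills in only the green channel, using bilinear interpolation, the simplest demosaicing method. Real cameras use more sophisticated algorithms, and the function names here are purely illustrative.

```python
from typing import List


def bayer_color(row: int, col: int) -> str:
    """Filter color at (row, col) for an RGGB Bayer layout:
    R G
    G B   (repeats every 2x2 pixels)."""
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def interpolate_green(raw: List[List[float]], row: int, col: int) -> float:
    """Estimate green at (row, col). Green pixels keep their own value;
    red and blue pixels average their up/down/left/right neighbors,
    which are all green in an RGGB layout (bilinear interpolation)."""
    if bayer_color(row, col) == "G":
        return raw[row][col]
    h, w = len(raw), len(raw[0])
    neighbors = [
        raw[r][c]
        for r, c in ((row - 1, col), (row + 1, col), (row, col - 1), (row, col + 1))
        if 0 <= r < h and 0 <= c < w  # skip neighbors outside the sensor
    ]
    return sum(neighbors) / len(neighbors)
```

The same neighbor-averaging approach, applied to the red and blue channels, gives every pixel all three color values.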

The Super-CCD Sensor

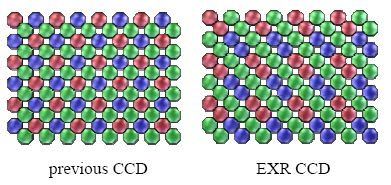

There are other kinds of sensors, though. One is the Super-CCD sensor, which Fujifilm developed in the late nineties and early 2000s. The Super-CCD sensor uses octagonal pixels arranged diagonally (that is, at 45°). This way, more and larger pixels can be fitted onto the sensor’s surface than with a regular CCD sensor that uses rectangular pixels in a horizontal and vertical grid.

When it first hit the market, the Super-CCD did indeed provide better image quality than regular CCDs, so Fuji continued to improve the technology and developed several variants of it. Some of these, namely the ‘SR’ and ‘EXR’ versions, put two photosites at each pixel location. During image processing, the information from the two photosites was then combined to create an image with either a higher dynamic range (i.e., a wider range of brightness levels, from deep shadows to bright highlights, that can be recorded without clipping) or less image noise.
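A simplified sketch of how two photosites at one location can extend dynamic range: a high-sensitivity photosite is used as long as it isn’t clipped, and once it saturates, the reading from a less sensitive photosite is rescaled to the same brightness scale. Fujifilm’s actual processing is not described in this article; the sensitivity ratio of 4, the 12-bit saturation level and the function name are all hypothetical.

```python
def merge_dual_photosite(primary: float, secondary: float,
                         ratio: float = 4.0, full_well: float = 4095.0) -> float:
    """Combine a high-sensitivity (primary) and a low-sensitivity
    (secondary) reading from the same pixel location into one value on
    the primary's scale.

    `ratio` is how many times more sensitive the primary photosite is;
    both `ratio` and `full_well` are hypothetical values."""
    if primary < full_well:
        # Primary not clipped: use it, since it collected more light.
        return primary
    # Primary saturated (blown highlight): rescale the secondary reading,
    # which still holds detail because it collected less signal.
    return secondary * ratio
```

For example, `merge_dual_photosite(4095, 2000)` returns `8000.0`, a highlight value the primary photosite alone could never have recorded.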

Notable cameras that use Super-CCD sensors are the Fujifilm S3 Pro, the F30, and the F200EXR.

The X-Trans CMOS Sensor

After Fuji had developed the Super-CCD, they started a similar venture based on CMOS sensor technology. This time, however, instead of giving the sensor a different alignment of the individual pixels, Fuji decided to abandon the classic Bayer pattern color filter design and introduce an entirely new type of color filter, which they dubbed ‘X-Trans’.

The X-Trans sensor uses a color filter array that repeats not every 2×2 pixels, but every 6×6 pixels. Whereas the Bayer pattern has two green, one red, and one blue pixel per 2×2 square, the X-Trans pattern has five green, two red, and two blue pixels per 3×3 square. In addition, the red and blue pixels swap positions from one 3×3 square to the next, so that the complete pattern repeats only every 6×6 pixels.
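The construction can be sketched in a few lines. The 3×3 block below is one commonly published representation of the X-Trans layout; the exact starting phase may differ between camera models, so treat it as an assumption. A second block is derived by swapping red and blue, and the two are tiled checkerboard-style into the 6×6 unit.

```python
from collections import Counter
from typing import List

# One commonly published 3x3 X-Trans block (assumed; phase may vary).
BLOCK_A = ["GGR",
           "GGB",
           "BRG"]


def swap_red_blue(block: List[str]) -> List[str]:
    """Return the block with red and blue filters exchanged."""
    table = str.maketrans("RB", "BR")
    return [row.translate(table) for row in block]


def xtrans_pattern() -> List[str]:
    """Tile the two 3x3 blocks checkerboard-style into the 6x6 unit."""
    block_b = swap_red_blue(BLOCK_A)
    top = [a + b for a, b in zip(BLOCK_A, block_b)]
    bottom = [b + a for a, b in zip(BLOCK_A, block_b)]
    return top + bottom


pattern = xtrans_pattern()
print("\n".join(pattern))
print(Counter("".join(pattern)))  # filter counts over the whole 6x6 unit
```

Running it prints the six rows of the tile and counts 20 green, 8 red and 8 blue filters, i.e. five green, two red and two blue per 3×3 block. You can also check that every row and column of the tile contains red, green and blue filters, a property Fujifilm highlights for this layout.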

This unique color filter layout helps reduce so-called color moiré, an unpleasant effect of false colors along the edges of fine, repeating structures such as the fibers in a piece of cloth. The effect arises when such fine detail interacts with the regularly repeating color filter pattern, so that the interpolation algorithm that calculates the missing color information for each pixel produces colors that aren’t actually there. Another benefit of the X-Trans array is that, thanks to the less repetitive filter pattern, color noise looks less regular, which many photographers find more pleasing.

Notable cameras using this technology are Fuji’s X-series cameras such as the X-Pro1 and the X100S.

The Foveon Sensor

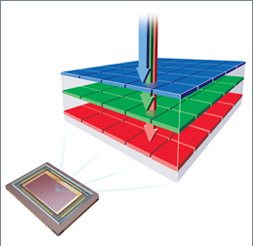

A particularly clever sensor design was invented by a company called ‘Foveon’ in the late nineties and early 2000s, around the same time as Fuji’s Super-CCD. Unlike common CCD and CMOS sensors, which use one layer of photosites and obtain color information through a color filter array, the Foveon X3 sensor uses three layers of photosites, each recording one of the three primary colors. (In that regard, the Foveon sensor is very similar to color film.)

What makes the Foveon sensor technology possible is the fact that different wavelengths of light penetrate the silicon that digital sensors are made of to different depths: blue light is absorbed close to the surface, while red light travels the deepest. The Foveon sensor makes use of this peculiarity and stacks three layers of photosites on top of each other, with the blue-sensitive layer on top, the green-sensitive layer in the middle, and the red-sensitive layer at the bottom. As a result, all three primary colors are recorded at each pixel location, and no interpolation of color information is needed during image processing.
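The depth effect can be illustrated with exponential (Beer-Lambert) attenuation, where the light remaining at depth z is I(z) = I0 · exp(−z / d) for an absorption depth d. The absorption depths below are rounded, approximate values for silicon, and the layer boundaries are hypothetical; neither describes an actual Foveon design.

```python
import math

# Approximate absorption depths in silicon, in micrometres (depth at which
# about 63% of the light has been absorbed). Rounded, illustrative values.
ABSORPTION_DEPTH_UM = {"blue (450 nm)": 0.4, "green (550 nm)": 1.5, "red (650 nm)": 3.5}

# Hypothetical layer boundaries in micrometres: top, middle, bottom.
LAYERS_UM = {"top": (0.0, 0.5), "middle": (0.5, 2.0), "bottom": (2.0, 6.0)}


def absorbed_fraction(depth_um: float, z_start: float, z_end: float) -> float:
    """Fraction of the incoming light absorbed between two depths, following
    exponential (Beer-Lambert) attenuation: I(z) = I0 * exp(-z / depth)."""
    return math.exp(-z_start / depth_um) - math.exp(-z_end / depth_um)


for color, depth in ABSORPTION_DEPTH_UM.items():
    shares = {name: absorbed_fraction(depth, a, b) for name, (a, b) in LAYERS_UM.items()}
    best = max(shares, key=shares.get)  # layer that absorbs the most of this color
    print(f"{color}: " + ", ".join(f"{k} {v:.2f}" for k, v in shares.items())
          + f" -> mostly {best}")
```

In this model, blue light ends up mostly in the top layer, green in the middle and red at the bottom. The shares overlap considerably, though (green light, for example, is spread across all three layers), so the three layer signals still have to be separated mathematically into clean color values.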

This has the huge advantage that Foveon sensors deliver images with better and more accurate color reproduction than regular Bayer pattern sensors, and also with more fine detail, since no information is lost to color filtering and interpolation. On the downside, to this day the Foveon technology suffers from heavy image noise when the ISO sensitivity is dialed up.

Notable cameras using this sensor technology are the Sigma SD- and DP-series of cameras, although Foveon sensors have occasionally been put in cameras of other manufacturers as well.

Recent Developments

CCD and CMOS sensors are the two main sensor types used today, with CMOS being much more common. Foveon sensors are currently used only in Sigma cameras, and the X-Trans sensor only in Fuji cameras, which means that the majority of digital cameras today use CMOS sensors with a Bayer pattern color filter array. Recently, however, a number of very interesting patents for new sensor technologies have been filed, and we’ll take a closer look at some of them now.

Color Splitters Instead of Color Filters

Earlier this year, Panasonic showed off a new kind of imaging sensor that uses color splitters instead of color filters. This technology promises much better light-gathering capability, since none of the incoming light would be lost in color filters (which, as the name suggests, filter out most wavelengths of the light so that each pixel receives only red, green, or blue light, depending on which filter sits in front of it). Instead, tiny color splitters on top of the pixels would split the incoming light into its color components and pass these on to the surrounding pixels, effectively using almost all of the incoming light.
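A back-of-the-envelope comparison shows why this matters. Assuming idealized conditions (white light with equal energy in red, green and blue, perfect filters and a perfect splitter), a color filter keeps only one third of the light that reaches its pixel, while a splitter ideally redirects all of it. Real devices fall short of both ideals, so the factor of three below is an upper bound under these assumptions, not a figure for Panasonic’s sensor.

```python
from fractions import Fraction

# Idealized model: white light carries equal energy in red, green and blue,
# and filters/splitters are perfect. Real devices fall short of both.
BAND_SHARE = Fraction(1, 3)


def filter_array_efficiency() -> Fraction:
    """A color filter passes only its own band and absorbs the other two,
    so each pixel keeps one third of the light that reaches it."""
    return BAND_SHARE


def splitter_efficiency() -> Fraction:
    """A perfect splitter redirects all three bands to neighboring pixels
    instead of absorbing them, so (ideally) all of the light is used."""
    return 3 * BAND_SHARE


gain = splitter_efficiency() / filter_array_efficiency()
print(f"filters: {filter_array_efficiency()}, splitter: {splitter_efficiency()}, gain: {gain}x")
```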

Clear Pixels Instead of Green Pixels

Aptina, one of today’s main sensor technology developers, recently introduced a concept for a sensor that uses clear pixels instead of green pixels in order to capture more of the incoming light. The clear pixels, which register all wavelengths of visible light, would yield a brighter image, meaning shorter exposure times and less noise when taking pictures in low light. The missing green color information would be calculated by comparing the information from the clear pixels with that of the surrounding red and blue pixels.
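A minimal sketch of the idea, assuming (hypothetically) that a clear pixel’s response is simply the sum of its red, green and blue responses, and that red and blue can be estimated at the clear pixel’s location by averaging nearby red and blue pixels. Aptina’s actual processing is not described in this article; the function name and the additive model are illustrative assumptions.

```python
from statistics import mean
from typing import Sequence


def estimate_green(clear: float,
                   red_neighbors: Sequence[float],
                   blue_neighbors: Sequence[float]) -> float:
    """Estimate green at a clear pixel from its own reading and the
    surrounding red and blue pixels (idealized additive model)."""
    red = mean(red_neighbors)    # interpolate red at this location
    blue = mean(blue_neighbors)  # interpolate blue at this location
    # Assuming clear = red + green + blue, green is whatever the clear
    # pixel recorded that red and blue do not account for.
    return max(clear - red - blue, 0.0)  # clamp: noise can push it negative
```

For instance, a clear reading of 90 with red neighbors of 20 and 40 and blue neighbors of 10 and 30 gives an estimated green value of 40.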

The Organic Sensor

Panasonic and Fujifilm have recently collaborated on a new type of CMOS sensor that uses an organic material instead of the common silicon for the photosensitive layer. This technology promises to have greater light gathering capability than current sensors, which means shorter exposure times and less noise when photographing in low light. The organic sensor seems to be in the final stages of development, and could actually be implemented in digital cameras in the very near future.

The Graphene Sensor

Graphene is the new super material. It consists of a single, one-atom-thick layer of graphite (a mineral made up of carbon atoms), and it is a highly versatile material. Recently, researchers started developing an imaging sensor that uses graphene instead of silicon as the photosensitive material. Early studies suggest that a sensor made of graphene could be up to a thousand times more sensitive to light than current sensors. In practice, this means that we could soon be able to take properly exposed pictures in near darkness without a flash or a tripod, not to mention the benefits for scientific applications such as space observation.
