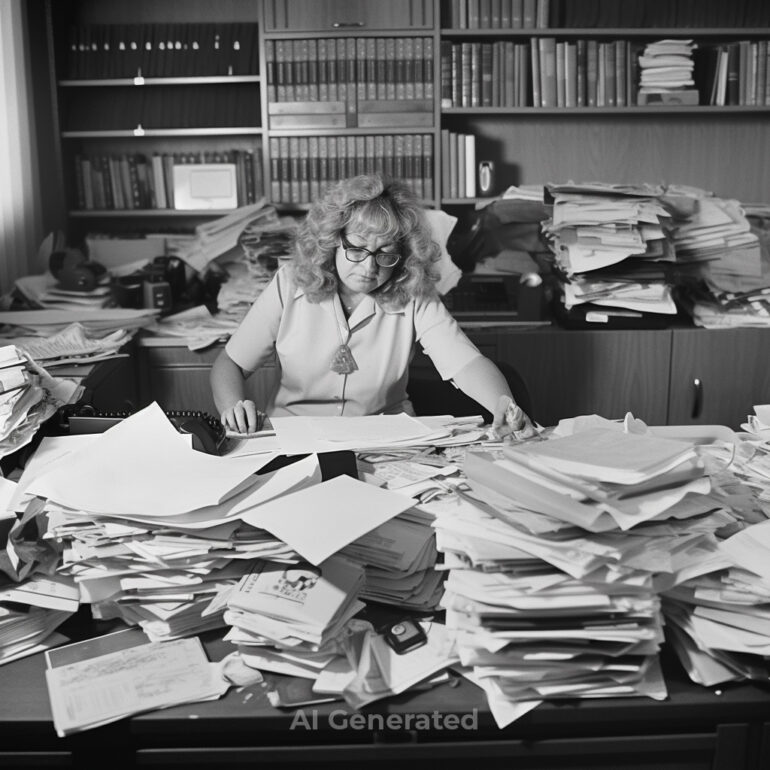

Midjourney v5.1 is out, and it’s becoming increasingly difficult for the untrained eye to distinguish an AI-generated image from an actual photograph. Let’s set aside the fact that a whole bunch of wannabes think they’re going to become the next famous hyperrealistic artist overnight. We’ve seen how AI imagery has fooled people in the past few weeks. Did you think that the Pope was really wearing an oversized white puffer in public? Or that former US President Donald Trump was arrested in public some weeks ago? If you didn’t inspect these images up close, chances are you could have been fooled by them. And while fake imagery has been around for decades, AI generators have made creating it much easier for pranksters and scammers. Could AI watermarks help solve this raging issue?

People Are Easily Fooled

A particular family member of mine, who shall remain unnamed, often comes up to me with “news” he’s received. Often such news is of an inflammatory and incendiary nature. “Did you see those photos? How was such a thing allowed to happen??” he’d say. And as always, I’d ask him where he got those photos from and who sent them to him. Inevitably it’d be from some weird Facebook group or a WhatsApp forward from someone. But he’d hardly take the time to verify their authenticity before getting aggravated about them. Doctoring photos with Photoshop has become a lot easier. The effort needed to take someone out of an image, or add them into one, has dropped dramatically over the last couple of decades. Still, in almost all those instances, you’d need an existing photograph to doctor to begin with. Not anymore.

It’s All Too Easy To Create Fakes Now

It’s something that’s exploded out of control in the last few months, but it’s been around for a few years. Remember NVIDIA’s GauGAN from 2019? The real-time AI art sensation generated convincing images from just a few brushstrokes back then. Now, hyperrealistic fake images can be generated just by typing words and sentences into Midjourney. And until last month, people were doing this for free. We even used Midjourney to illustrate the points in our article on AI-generated photos. That wasn’t even using the latest v5.1, which has apparently improved by leaps and bounds over previous versions. It took a couple of tries before I could get it to generate exactly what I wanted. The key was to be highly descriptive to avoid ambiguity for the AI tool.

Misuse of AI Is Becoming Rampant

A spate of misuse and resulting misinformation (yeah, like they didn’t expect that to happen) led Midjourney to end free usage in April. I guess those earlier-mentioned images were too realistic for most of the public. The person who generated those images of Trump and created a viral thread on Twitter got his Midjourney account banned. I see countless people on Twitter posting images they got Midjourney to make, labeling them as the next big explosion on the art scene. “You can’t even tell it’s not real,” they keep repeating to themselves and their followers, while the experts among us guffaw inside at the flaws.

But that’s really what fakery is all about, isn’t it? Fooling the gullible. “Wow, it looks so cinematic, just like the style of XYZ film director.” Yeah, it does, but it isn’t your work, dude. You just typed in some words for the AI to recreate something so that you could post it for likes. An eloquent 8-year-old could easily do better than you if he had access to the same tool.

Can A Self-Aware AI Do Better?

It’s not quite there yet, and while we don’t want AI to reach the awareness levels of Skynet seen in the Terminator film franchise, it could help curb the issue of fake AI imagery. AI tools are constantly learning, and they need to be in order to keep improving. How much a tool improves, and in what direction, depends greatly on the data it has access to. I’d be interested to know if and how AI can be trained to generate such imagery only with clear, visible watermarks. Not the kind that indicates who the author of the image was, but the kind that clearly marks an image as not being a photograph.

Remember the climax scene in the 1987 film RoboCop where Directive 4 prevents him from shooting someone? I want that level of integration in AI image tools today, where they absolutely can’t and won’t generate an image based on text prompts without some kind of watermark. It’s imperative that every AI image-generating tool is built to create pictures only with watermarks distinguishing them as AI creations. This could be achieved by incorporating watermarking requirements into the training process of AI models. By doing so, we can ensure that AI-generated images come with visible watermarks that indicate they are not actual photographs. In a loose sense, AI is already “self-aware,” because it has been programmed to recognize patterns and learn from its own mistakes.

Steps That Need To Be Taken

Founded in 2019 by Adobe, Twitter, and the New York Times, the Content Authenticity Initiative was set up to counter the spread of disinformation, especially online. In 2021, Adobe co-founded the Coalition for Content Provenance and Authenticity (C2PA) with Arm, BBC, Intel, Microsoft, and Truepic. The coalition aims to address the “prevalence of misleading information online through the development of technical standards for certifying the source and history (or provenance) of media content.” In other words, to make it easier to tell a fake or doctored image from a real one. They have a tool called Verify that analyses images to see what level of changes have been made.
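For the curious, here is a rough sketch of the general idea behind certifying provenance: record where an image came from, bind that record to the exact image bytes with a hash, and sign the record so tampering can be detected. This is not the C2PA format or the Verify tool. As I understand it, real implementations rely on certificate-based signatures, whereas this sketch swaps in a shared-secret HMAC from the Python standard library to stay short. The names `make_manifest`, `verify_manifest`, and `SIGNING_KEY` are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. A real system would use certificate-based signatures,
# not a shared secret baked into the code.
SIGNING_KEY = b"demo-key-not-for-real-use"


def make_manifest(image_bytes: bytes, source: str, edits: list[str]) -> dict:
    """Build a signed record of an image's source and edit history."""
    claim = {
        "source": source,
        "edits": edits,
        # The hash ties this claim to one exact sequence of image bytes.
        "image_sha256": hashlib.sha256(image_bytes).hexdigest(),
    }
    payload = json.dumps(claim, sort_keys=True).encode("utf-8")
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify_manifest(image_bytes: bytes, manifest: dict) -> bool:
    """Return True only if the claim is untampered and matches the image."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode("utf-8")
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # someone altered the claim itself
    actual_hash = hashlib.sha256(image_bytes).hexdigest()
    return manifest["claim"]["image_sha256"] == actual_hash


image = b"stand-in for real image bytes"
manifest = make_manifest(image, "text-to-image generator", ["generated"])
print(verify_manifest(image, manifest))            # untouched image
print(verify_manifest(image + b"edit", manifest))  # image changed afterwards
```

The first check passes and the second fails, because changing even one byte of the image changes its hash. Editing the claim, say rewriting the source to “camera,” fails too, since the signature no longer matches.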

This is important and should run in parallel with the aforementioned AI training. The C2PA principles are great for historical photographs and photos taken with cameras. What’s turning out to be a more significant concern now is fake AI imagery that’s created explicitly to masquerade as real photographs. More often than not, it’s done by those who want the rush of fame that comes with online virality. Not realizing the consequences their actions could have, they probably think they can throw their hands in the air and claim innocence.

Soon Is Too Late. It Has To Be Done Now

The onus remains on the creators of the various AI tools that have now flooded the world. They need to recognize the importance of deep-learning-based watermarking, which embeds watermarks directly into the image data itself. This is a promising avenue for creating watermarked images that are both visually appealing and highly resistant to unauthorized use and tampering.
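To make “embedding a watermark into the image data itself” a little more concrete, here is a toy sketch. It is emphatically not deep-learning-based watermarking. It uses least-significant-bit (LSB) marking, a much older and simpler technique, and it treats an image as a flat list of 0–255 pixel values rather than a real file. The label `MARK` and the function names are my own assumptions for illustration.

```python
# Toy illustration only: LSB marking, a classical technique far simpler
# than the learned watermarks discussed in the article.

MARK = "AI-GENERATED"  # hypothetical label, not part of any standard


def _to_bits(text: str) -> list[int]:
    """Expand ASCII text into a flat list of bits, most significant bit first."""
    return [(byte >> shift) & 1
            for byte in text.encode("ascii")
            for shift in range(7, -1, -1)]


def embed_mark(pixels: list[int], mark: str = MARK) -> list[int]:
    """Hide `mark` in the lowest bit of the first len(mark) * 8 pixel values."""
    bits = _to_bits(mark)
    if len(bits) > len(pixels):
        raise ValueError("image too small to hold the mark")
    marked = list(pixels)
    for i, bit in enumerate(bits):
        # Clear the lowest bit, then set it to the mark's bit.
        marked[i] = (marked[i] & ~1) | bit
    return marked


def read_mark(pixels: list[int], length: int = len(MARK)) -> str:
    """Reassemble `length` characters from the lowest bits of the pixels."""
    bits = [p & 1 for p in pixels[: length * 8]]
    values = []
    for i in range(0, len(bits), 8):
        value = 0
        for bit in bits[i:i + 8]:
            value = (value << 1) | bit
        values.append(value)
    return bytes(values).decode("ascii", errors="replace")


def has_mark(pixels: list[int], mark: str = MARK) -> bool:
    """Check whether the pixels carry the expected label."""
    return len(pixels) >= len(mark) * 8 and read_mark(pixels, len(mark)) == mark


pixels = [(i * 37) % 256 for i in range(200)]  # stand-in for real image data
marked = embed_mark(pixels)
print(has_mark(marked))
```

Each pixel value changes by at most 1, so the mark is invisible to the eye. It is also fragile: resizing or re-compressing the image would scramble those lowest bits, which is exactly the weakness that sturdier, learned watermarks are meant to address.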

The last thing I want is for my relative to come to me again, fuming with rage at another doctored image he was sent. And given how quickly even those with no idea about photography can generate such images, it’s only a matter of time before one such image triggers a real-world catastrophe.

I know there are those who’d argue that watermarks can be removed too. But removal adds an extra step, and that makes things harder for those who want to spread fake images. Many might be unaware of watermark removal tools and might even drop the idea of creating fakes altogether. AI tools that are trained to generate only watermarked images can be the starting point for this. Training AI in this way is, beyond doubt, the need of the hour. The more it’s trained to do this, the better it will become at embedding those watermarks.

All the images seen in this article, including the lead image, are AI-generated by Flickr user Kevin Dooley. Used under the Creative Commons BY 2.0 license. Watermarks have been added for illustrative purposes.