AI slop is everywhere. I don’t say that to sound alarmist, but it’s very likely that you’ve encountered AI-generated content in some shape or form in the past couple of years. With so much digital garbage out there, how can you know if what you’re looking at, interacting with, and possibly purchasing was made by a human? We spent the better part of a month researching and testing AI detection tools, and here are the five best we found across the internet.

The Big Picture: Better Than Human Eyes?

This review departs a little from our usual approach to software reviews, because we’re currently in the Wild West of both generative AI tools and generative AI detection tools. In many ways, it feels like an arms race between the image generation tools and the companies developing detection tools. Ultimately, outside of trained human eyes, the most effective and consistent tool has been Winston AI. It was the only AI detection tool to correctly identify an AI-generated image from Reddit, which we’ve dubbed “T-Rex Girl.” Even then, it wasn’t perfect: it incorrectly classified one of our GenAI images as most likely human-made.

We’re still developing our rating criteria for these types of tools and will update our process periodically. In the meantime, you can try out Winston AI for free on its website.

Building a Testing Matrix

To properly test these AI detection tools, our first order of business was to assemble a test group of images: some taken by The Phoblographer team, some generated with various AI tools, and, lastly, a heavily edited image that was then scaled down (to simulate the degradation images undergo when posted to social media apps). That last one was our wild card image. In the end, we selected the following six images to run through each test:

via Reddit

Meet Your AI Detection Tools

Now that we’ve built our sample, it’s time to set the criteria for choosing which detection tools to test. First, there should be as few barriers to entry as possible: we eliminated any tool that required a sign-up to test more than one or two images for free, as well as any tool that locked its results behind a paywall. Second, each company had to publish documentation of its detection criteria. While that may not be the most effective way to determine whether a company is above board, it does help weed out those who might be stealing data to build their own visual and language models. From there, we narrowed the field to five highly recommended tools: Decopy AI, Winston AI, HiveModeration, SightEngine, and ChatGPT.

Decopy AI

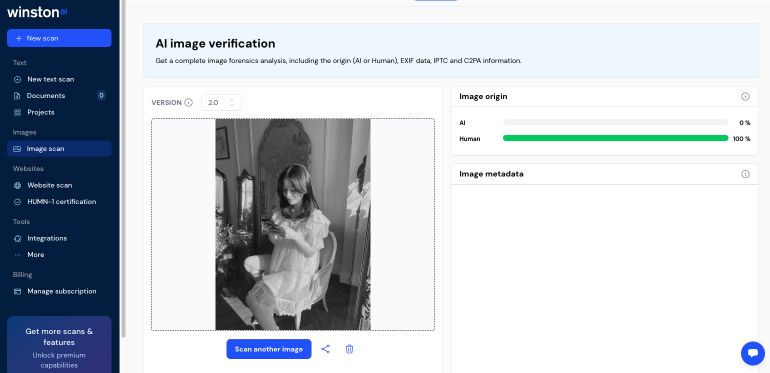

Decopy AI is a tool that initially aimed to filter out generative AI in the classroom. Its primary products include a homework checker, a writing prompt tool, and even a tool to summarize YouTube videos (how short have our attention spans gotten?). But we’re here for its Image Detection Tool. By the company’s own admission, it works best on images made with Midjourney, Stable Diffusion, DALL-E, and Flux.

During our test, Decopy AI accurately identified all of the human-made images without issue, but it failed to flag any of the GenAI images we tested as AI-generated. Its result for our wild card image did reflect the heavy AI-generated edits that had been made to it.

Winston AI

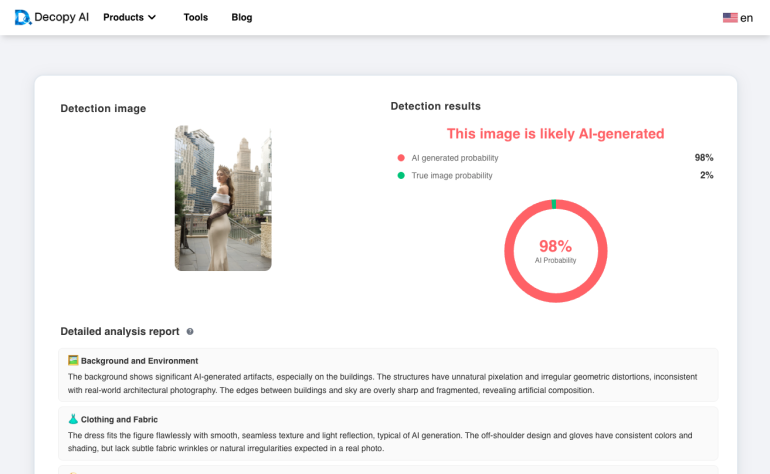

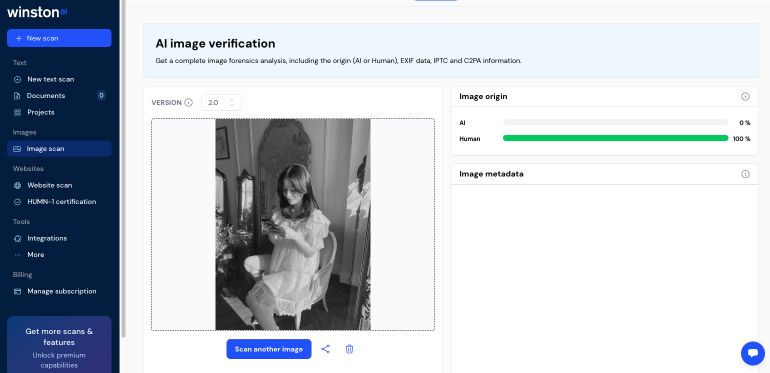

Winston AI claims to “set the standard in AI content detection,” having built its detection model against Claude, Google Gemini, and “all known AI models.” Additionally, Winston AI is the only tool we found that is a member of the Content Authenticity Initiative, a consortium of companies working to validate content on the post-AI internet.

In practice, it was the most accurate of all the tools reviewed, scoring five out of a possible six points. That said, Winston AI was far from perfect: it incorrectly marked an Adobe Firefly-generated image as made by a human photographer. To make matters worse, that Firefly image was by far the least convincing of the AI-generated images we fed to these detection tools.

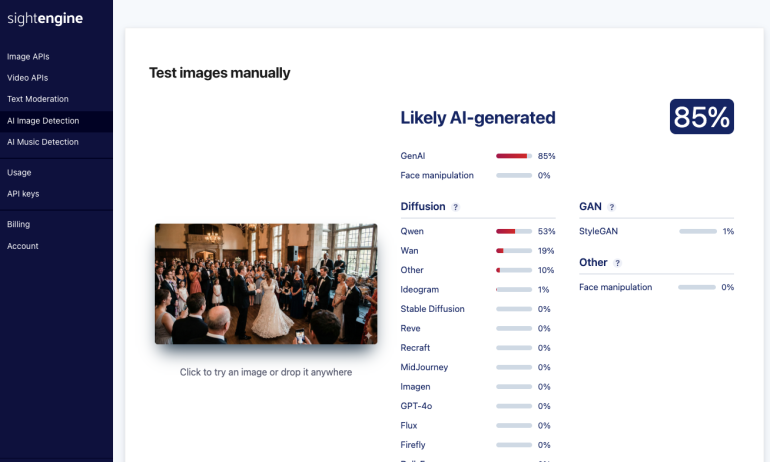

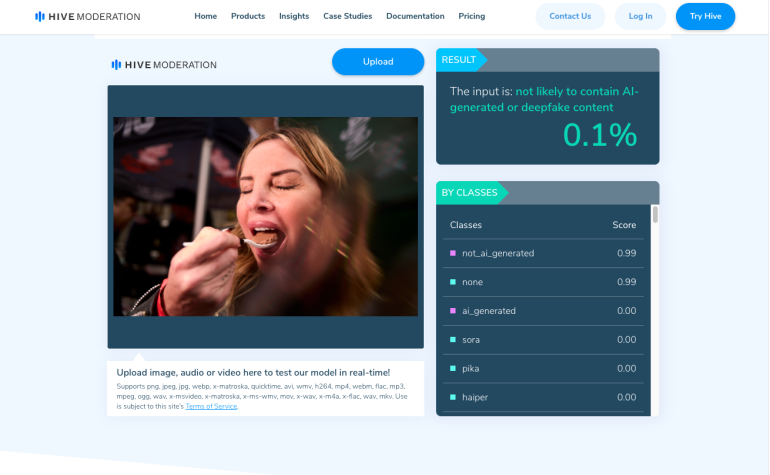

HiveModeration and SightEngine – Draw

HiveModeration is an AI detection tool built for scale. The company has been building out its detection tools to integrate with external systems, in large part thanks to its robust API. SightEngine is very similar: both aim to be plugged in as moderation tools that identify, and potentially automatically block, obscene and explicit content on the internet. As someone whose first Web 2.0 job was community moderation, I’m happy these things exist.

HiveModeration and SightEngine yielded identical results in our six-image test, each achieving a respectable 4.5 out of six. Both accurately identified our Firefly- and Gemini (Nano Banana)-generated images, but spectacularly failed to identify our beloved T-Rex Girl.

ChatGPT

During my initial pitch call with our EIC, Chris Gampat, he asked, “Why not run it through ChatGPT?” It makes sense: ChatGPT is the product most people associate with generative AI, so why wouldn’t it be able to detect AI-generated images? Unfortunately, ChatGPT scored second-lowest in our test, ahead of only Decopy AI, which I wasn’t really expecting. Not only did it fail to identify the Nano Banana image, but it also failed spectacularly at ID-ing T-Rex Girl. Here’s ChatGPT’s rationalization on T-Rex Girl:

Verdict: Likely REAL, though could possibly be an AI-generated stylized portrait — but signs point more toward real.

Why it looks real:

• Shadows cast on her face, dress, and the wall align naturally with the light direction.

• The lace details on the dress are extremely clean and consistent — something AI often muddles.

• Reflection in the mirror looks physically correct, without distortions.

• Hair strands and skin texture appear natural, not overly smoothed or plastic-like.

Why there’s a small chance of AI:

• The overall soft, dreamy aesthetic could be intentionally stylized, which sometimes resembles AI portrait filters.

• A few edges in the background are extremely soft, but this can also be due to camera lens choice/lighting.

Scores at a Glance

| Tool | Test 1 (CG) | Test 2 (NK) | Test 3 (T-Rex) | Test 4 (AL, wild card) | Test 5 (Firefly) | Test 6 (Nano Banana) | Score |
|---|---|---|---|---|---|---|---|
| Decopy AI | Human-True | Human-True | Human-False | AI-50 | Human-False | Human-False | 2.5 |
| Winston AI | Human-True | Human-True | AI-True | Mostly Human-True | Human-False | AI-True | 5 |
| HiveModeration | Human-True | Human-True | Human-False | Human-50 | AI-True | AI-True | 4.5 |
| SightEngine | Human-True | Human-True | Human-False | Human-50 | AI-True | AI-True | 4.5 |
| ChatGPT | Human-True | Human-True | Human-False | AI-50 | AI-True | Human-False | 3.5 |

Scoring: 1 point was awarded for each correctly identified image, and 0.5 points for a partially correct call (marked “50”) on our wild card (Test 4). No points were awarded or deducted for an incorrectly identified image.
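As a quick sanity check, the short Python sketch below re-derives the totals from the table. It assumes each cell reduces to “True” (1 point), “50” (0.5 points), or “False” (0 points), and it counts Winston AI’s “Mostly Human” verdict on the wild card, which the table marks True, as a full point; that is the only reading that reproduces its published score of 5. The variable names are our own.

```python
# Verdict outcome per test (Tests 1-6), copied from the table above:
# "True" = correct, "False" = incorrect, "50" = partial credit on the wild card.
RESULTS = {
    "Decopy AI":      ["True", "True", "False", "50", "False", "False"],
    "Winston AI":     ["True", "True", "True", "True", "False", "True"],
    "HiveModeration": ["True", "True", "False", "50", "True", "True"],
    "SightEngine":    ["True", "True", "False", "50", "True", "True"],
    "ChatGPT":        ["True", "True", "False", "50", "True", "False"],
}

# Points per outcome; wrong answers neither add nor subtract points.
POINTS = {"True": 1.0, "50": 0.5, "False": 0.0}


def score(verdicts):
    """Sum the points for one tool's six verdicts."""
    return sum(POINTS[v] for v in verdicts)


scores = {tool: score(verdicts) for tool, verdicts in RESULTS.items()}

# Print a leaderboard, highest score first.
for tool, total in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{tool}: {total} / {len(RESULTS[tool])}")
```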

False Positives Abound

Despite Winston AI earning the most points in our test, the truth is that every tool we tested misclassified at least one AI-generated image as human-made. Some of those misses were, in my opinion, egregious, such as the Adobe Firefly image of the couple in a grassy field, as well as in a city; there are obvious signs if you take more than a passing glance at it. Just as striking was the failure of every tool except Winston AI to identify T-Rex Girl as an AI-generated image.

In fairness to Winston AI, its report pulled something very interesting from the Firefly image that none of the other tools did: the Adobe Image Generate metadata embedded in the file. Adobe Firefly properly labels images created with its generative AI tool, a feature I haven’t seen in other image generators. I’m going out on a limb here and assuming (you know what happens when we do that) that because the image carries a Content Credentials tag in its metadata, Winston AI’s scoring tool marks it as “safe.”
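Winston AI doesn’t document how it reads this tag, so the following is only a rough illustration of the kind of check involved, not its actual method. Content Credentials follow the C2PA specification, which, as we understand it, embeds the manifest in a JPEG’s APP11 segments as JUMBF boxes labeled “c2pa.” This minimal Python sketch (the function name `has_c2pa_manifest` is hypothetical) walks a JPEG’s header segments and reports whether such a segment is present. It does not verify the manifest’s cryptographic signature, so a forged or stripped tag would fool it; real verification should go through official C2PA tooling.

```python
import struct

APP11 = 0xEB  # JPEG APP11 marker; C2PA (per our reading) stores JUMBF boxes here
SOS = 0xDA    # Start of Scan: compressed image data follows, so stop scanning
EOI = 0xD9    # End of Image


def has_c2pa_manifest(data: bytes) -> bool:
    """Heuristic presence check for a C2PA (Content Credentials) manifest
    in a JPEG byte string. Does NOT validate signatures or contents."""
    if data[:2] != b"\xff\xd8":  # every JPEG starts with the SOI marker
        raise ValueError("not a JPEG file")
    pos = 2
    while pos + 4 <= len(data):
        if data[pos] != 0xFF:
            break  # unexpected layout; give up rather than guess
        marker = data[pos + 1]
        if marker == 0xFF:  # fill byte between segments; skip it
            pos += 1
            continue
        if marker in (SOS, EOI):
            break  # no more metadata segments before the image data
        # Segment length is big-endian and includes its own two bytes.
        (length,) = struct.unpack(">H", data[pos + 2:pos + 4])
        payload = data[pos + 4:pos + 2 + length]
        if marker == APP11 and b"c2pa" in payload:
            return True
        pos += 2 + length
    return False
```

Usage might look like `with open("photo.jpg", "rb") as f: print(has_c2pa_manifest(f.read()))`, where the filename is a placeholder.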

All of the tools we tested had a very important message for anyone considering using their service, free or paid: they will make mistakes. It’s a reminder that the best AI detection tools we have remain human intuition and a trained eye. Will this change down the line? I’m not entirely sure that the detection tools will be able to keep up with gen-AI image technology, at least not in the current environment free of regulation and proper guardrails. All the more reason to remain vigilant.