The rapid adoption of generative AI has allowed the technology to bleed over the edges, seeping into multiple areas of modern life at a dizzying pace. Yes, artists can easily avoid opening up ChatGPT. Far harder is avoiding Lightroom’s smart masking tools or Photoshop’s new heal tool. The Google search page that led you to this article? It contained AI. The eye detection and subject detection autofocus built into cameras? AI. Machine learning is built into the latest smartphones on both Android and iOS. This article was proofread by the AI-supported Grammarly. As the technology integrates into more areas of daily life, the ethical issues around AI look less like a solid, impenetrable black line and more like a smudge of gray.

Boycotting AI would mean restrictions beyond just a few software programs, and, as the technology continues to expand into more areas, artists and everyday tech users alike need to define where the ethical line is or risk looking back and realizing they stepped over that boundary a long time ago. If putting your name on a novel that AI wrote is wrong, is using Gmail’s new Gemini-based tools to speed up the mundane process of writing an email okay? If passing off a generated image as art is wrong, is using AI-supported tools to remove distractions from the background of a photograph right?

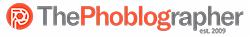

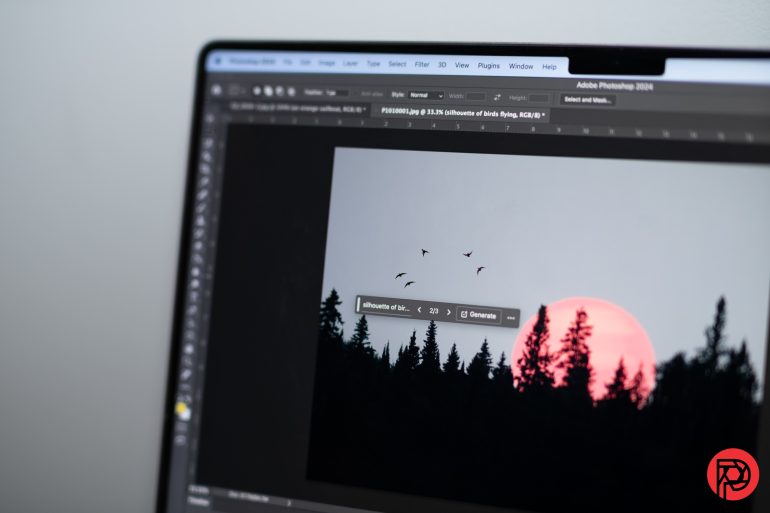

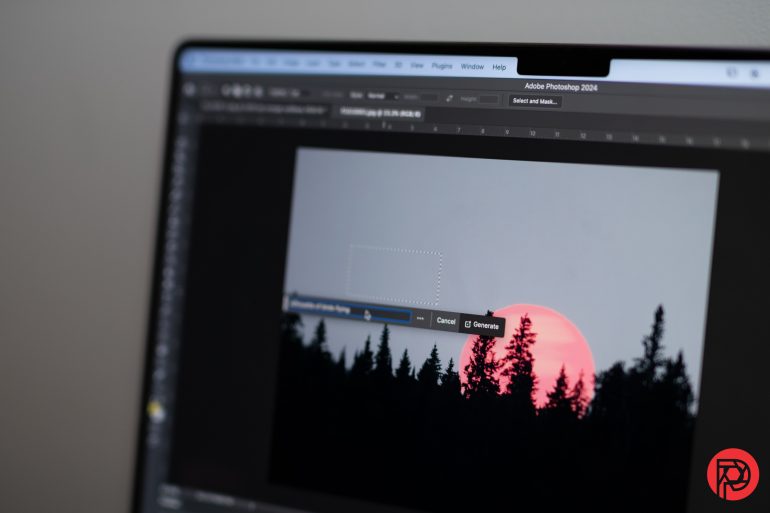

As an artist using both words and cameras as my tools of choice, I’m picking my way through the ethical minefield of the newest technology. I’ve used generative AI in Photoshop to remove a bra strap from a bride’s ill-fitting dress and to remove badly placed hotspots. As a journalist, I’ve tested generative AI from platforms like ChatGPT, Google Gemini, and Microsoft Copilot in order to help readers navigate the technology from the perspective of someone who is both a tech enthusiast and an artist.

The ethical issues surrounding the latest advancements in AI need to be discussed at a pace that matches the rapid implementation of machine learning. As generative AI continues to evolve, artists and tech users alike need to understand and navigate these seven ethical issues of generative AI.

1. Using copyrighted images for training

Legislation rarely keeps up with the pace of technology. A key debate in the legal and art communities alike is whether using copyrighted images to train AI falls under fair use. A number of lawsuits argue that using protected works to train AI results in software that can inadvertently violate copyright, including a key case filed by Getty after Stability AI’s software generated images that included a badly replicated watermark from the stock agency. Many AI software companies, meanwhile, argue that AI training falls under fair use exceptions, like those for education and research.

The issue with the fair use argument is that many of these AI companies are raking in big profits from software trained on artists’ work without compensating the creators or even giving them the option to be excluded. Perhaps the research into AI’s potential at institutions like MIT and Harvard could fall under educational fair use, but the argument is harder to make when the software companies raise $80 million in funding (Stability AI) or fetch an $80 billion valuation (OpenAI).

Not all AI was developed by scraping images from the web, however. For example, Adobe Firefly, the AI model powering many of the new features inside Lightroom and Photoshop, was trained using content from Adobe Stock. According to the company, eligible contributors were compensated if their work was used to train the first commercially released model of Firefly. However, some contributors were frustrated over the terms of use allowing such training and the inability to opt out without pulling images off the stock platform entirely.

2. Replicating another artist

Because generative AI is trained on existing images, the technology has the potential to cross the line from inspiration to blatant copying. This can happen in two ways: intentionally and unintentionally.

Some software programs have content guidelines that prevent someone from opening up a chat and asking for an image that looks like it came from Ansel Adams’s lens or Vincent van Gogh’s brush. For example, if you ask ChatGPT to recreate an image in the style of Richard Avedon, the software will refuse. However, there are loopholes around these restrictions, and they aren’t even hard to find. If you ask it to replicate a certain artist, ChatGPT will refuse, but it will then describe that artist’s style and ask if you want an image created using that description. Other platforms have no such restrictions in place, including the new beta of Grok by X, formerly Twitter.

Another potential pitfall is violating copyright without even asking for it. Even when I have typed straightforward prompts into a generative chatbot without asking for a certain style, I’ve received results that included things like logos in the background. When the AI is trained on copyrighted data without licensing the work, the possibility of inadvertently copying an existing work increases considerably.

3. Reproducing logos and licensed characters

Beyond replicating artwork, some generative AI platforms have been known to recreate logos and licensed characters. Getty has shared a number of examples where AI has generated an image and added the Getty watermark. I asked ChatGPT for an image of a fast-food restaurant and ended up with an image containing iconic golden arches. I asked X’s new Grok beta for an image of a political debate and received images full of the CNN logo.

These “mistakes” point to further issues with scraping the internet for training data. When bots scrape any image, they grab not just copyrighted work but works that contain trademarks and other intellectual property as well.

4. Creating recognizable pictures of people

The advancement of AI has also led to the advancement of deepfakes. Anyone with some Photoshop skills could already spread fake news, create fictional political propaganda, or blackmail a woman with an image of her face pasted on someone else’s naked body. With AI, very little technical skill is required. If you can type words into a box, you can create a deepfake.

Some of the larger AI companies are trying to reduce this misuse by programming the bot to refuse to create a picture of a recognizable person. However, many others have no such restrictions. When X (formerly Twitter) launched its beta of Grok, it earned both instant praise and criticism for its ability to generate images of recognizable people, quickly filling the social media platform with generated images of presidential candidates in compromising positions. The technology effectively makes it easy for anyone to create a photo of a politician or celebrity surrounded by drug paraphernalia, cheating on a spouse, or wearing inappropriate clothing.

5. Disclosing whether or not an image was generated by AI

AI ethical issues stretch beyond creating the image itself, extending into how the image is presented. Passing off an image one hundred percent generated by AI as original artwork is plagiarism. But when AI can be used not just to create an image from scratch but to enhance an existing image, the line fades to a foggy gray area that artists will need to use a moral compass to navigate.

Technology is, in fact, attempting to fight against passing off AI-created work as original. For example, the Content Authenticity Initiative, a nonprofit joined by several major companies including Canon, Leica, Nikon, and Adobe, has built a tool that analyzes an image’s metadata to tell someone with little technical know-how if an image was manipulated or created by AI.

This technology would need to be widely adopted, however, to become a key weapon in the war against the misuse of AI. Adobe Photoshop will embed metadata within an image that discloses the use of AI, but not all platforms do so. Another key is making the AI labeling tool easier to use. A good first step toward this is Facebook’s Made by AI label, which is automatically added to any work created by Meta’s AI but can also be applied to works created in other programs, like Photoshop. Few will take the time to save an image and upload it to another platform in order to find its origins, so having a label directly where the image is shared is a much more practical option.
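For readers curious what a metadata-based disclosure check might look like, here is a minimal Python sketch. It is not the Content Authenticity Initiative’s tool, and it does not verify signed credentials. It only assumes that an editing program has written one of the IPTC “digital source type” values for AI-generated media into the file’s embedded metadata as plain text. The function name `ai_disclosure` and the label wording are hypothetical, chosen for illustration.

```python
from typing import Optional

# IPTC digital source type terms that declare generative AI involvement.
# Assumption: the editing software wrote one of these into the file's
# embedded metadata as an uncompressed text URI.
AI_SOURCE_TYPES = {
    "trainedAlgorithmicMedia": "created by generative AI",
    "compositeWithTrainedAlgorithmicMedia": "edited with generative AI",
}


def ai_disclosure(image_bytes: bytes) -> Optional[str]:
    """Return a plain-language label if the metadata declares AI use.

    Hypothetical helper: a simple byte scan, not a full metadata parser.
    """
    for term, label in AI_SOURCE_TYPES.items():
        needle = ("digitalsourcetype/" + term).encode("ascii")
        if needle in image_bytes:
            return label
    # No declaration found. This does not prove the image is AI-free,
    # because metadata can be stripped when a file is re-saved or shared.
    return None


# Usage with a stand-in for real file contents:
sample = (
    b"<Iptc4xmpExt:DigitalSourceType>"
    b"http://cv.iptc.org/newscodes/digitalsourcetype/trainedAlgorithmicMedia"
    b"</Iptc4xmpExt:DigitalSourceType>"
)
print(ai_disclosure(sample))
```

A check like this only works when the metadata survives, which is part of why a label shown directly on the sharing platform is the more practical safeguard.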

6. Maintaining image integrity

Not all ethical issues surrounding the use of AI have a clear-cut line. The art community as a whole needs to debate the ethical boundaries of works that were created in part by an artist and in part by an AI. Is a photograph still a photograph if generative AI was used to remove the power lines in the background? Yes, I think so.

But if the background is an original photo and the subject is an AI generation, is it still a photograph? How much AI turns a photograph into a generated graphic instead? These are all questions the art community needs to debate as AI technology evolves.

7. Using proper terminology

The final key to navigating the ethical minefield of generative AI is using the correct words to describe AI-generated content. Is a work created entirely by a computer a photograph? No, but it could be called an image, a graphic, a visual, or simply content. Terms like art, as well as genre-specific terms like painting and drawing, should be reserved for human-generated works.

There is no technological solution for giving AI generations the proper name. Rather, it is something artists, journalists, and tech users alike need to make a conscious effort to do, putting aside the thesaurus and the urge to vary their word choice in order to call AI output what it is: a generation, a graphic, or content.